- For Firefox, open the same menu and choose “Save Page As.” Third-Party Options. Linux, and Android) or SiteSucker (for macOS and iOS). These programs can download entire websites.

- SiteSucker can be used to make local copies of websites. By default, SiteSucker “localizes” the files it downloads, allowing you to browse a site offline, but it can also download sites without making modifications.

- This application saves a web page as a single HTML file that is compatible with all browsers. The saved page is an accurate copy of the original. The application has the same features in Chrome and Firefox. Users can set the location of the saved files, and a saved file can include some predefined fields by default.

For simplicity’s sake, your easiest option on a Mac is to install Chrome or Firefox for the purposes of downloading all the images from a site, and use the add-ons those browsers have available.

15,655 downloads · Updated: September 8, 2020 · Shareware

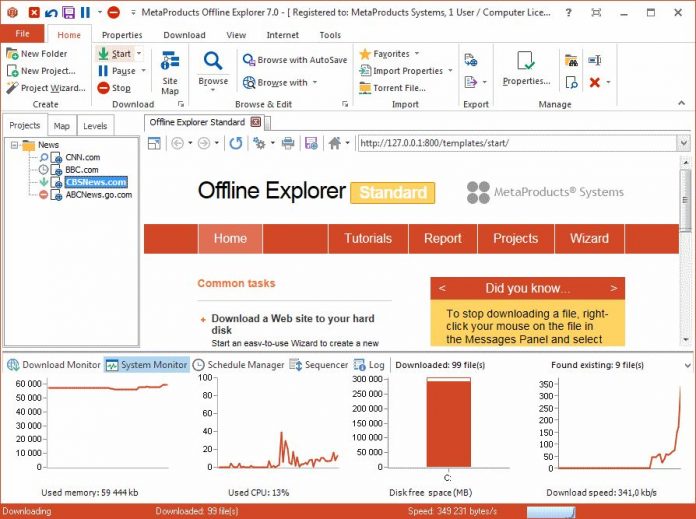

A lightweight and user-friendly application that provides the tools you need to download entire websites from the Internet.

What's new in SiteSucker 3.2.4:

- Fixed some problems with SiteSucker's built-in browser.

SiteSucker is a straightforward macOS application that downloads websites by asynchronously copying each site’s web pages, backgrounds, photos, videos and other files to your Mac’s hard disk.

Website downloader

In other words, you can use SiteSucker to effortlessly duplicate a site’s directory structure and store all the required data with just a few mouse clicks. You only have to type in or paste the URL, hit return and let SiteSucker do the hard work for you.

SiteSucker features an intuitive interface that enables you to start, pause or stop the download process, check the logs, open files and folders and monitor the queue list.

What is more, the History drop-down menu lists recently downloaded websites, while the Queue button shows or hides the Queue slide sheet.

Simple and clean interface

During the downloading process you can view the number of downloaded files and compare it with the remaining files, check the number of encountered errors and even skip unwanted files.

By default, SiteSucker “localizes” the downloaded files and, as a result, allows you to browse the website offline. However, you can configure SiteSucker to download sites without making modifications.

Settings Manager

Moreover, SiteSucker comes with support for multiple user settings that you can handle, edit and access from the Settings Manager window.

The Settings slide sheet lets you set the default download folder, suppress the login dialog, ignore robot exclusions, enable the desired logs, limit the download speed and file size, filter the downloaded files and exclude paths.

In the Preferences window, you can specify the source of bookmarks, set the number of connections for new documents and configure SiteSucker to notify you once or multiple times when all downloads are complete.

Download Hubs

SiteSucker is part of these download collections: Offline Browsers

SiteSucker was reviewed by George Popescu (4.0/5)

LIMITATIONS IN THE UNREGISTERED VERSION

- If a link is specified in a different tag, SiteSucker will not see it.

- SiteSucker totally ignores JavaScript. Any link specified within JavaScript will not be seen by SiteSucker and will not be downloaded. (If the Log Warnings option is on in the download settings, SiteSucker will include a warning in the log file for any page that uses JavaScript.)

- SiteSucker scans Flash (.swf) files for embedded plain text links, but it can only detect links to files that have one of the following extensions: html, swf, mp3, sit, zip, mov, gif, jpg, png, doc, or txt. SiteSucker also scans QuickTime movies (.mov) for URLs to alternate movies. SiteSucker cannot localize Flash files or QuickTime movies, and SiteSucker does not examine other media files for embedded links.

- By default, SiteSucker honors robots.txt exclusions and the Robots META tag. Therefore, any directories or pages disallowed by robot exclusions will not be downloaded by SiteSucker. This behavior, however, can be overridden with the Ignore Robot Exclusions setting under the Advanced tab in the download settings.

- 64-bit processor
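Before overriding the robot exclusions mentioned above, it can help to see what a site actually disallows. The following is a minimal sketch, assuming `curl` is installed; the helper name `show_robots` and the example.com URL are illustrative and are not part of SiteSucker.

```shell
# Hypothetical helper (not part of SiteSucker): print the Disallow rules
# from a site's robots.txt so you can see which paths a polite crawler skips.
show_robots() {
  site="${1%/}"   # strip one trailing slash, if present
  # -f: fail on HTTP errors, -sS: stay quiet but still report errors
  curl -fsS "$site/robots.txt" | grep -i '^disallow:'
}

# Usage: show_robots https://www.example.com
```

Note that this only inspects robots.txt; the Robots META tag lives inside individual pages and is not covered here.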

SiteSucker 3.2.4

- runs on: macOS 10.14 or later (Intel only)

- file size: 1.9 MB

- filename: SiteSucker_2.4.6.dmg

- main category: Internet Utilities

There are many reasons why you should consider downloading entire websites. Not all websites remain up for the rest of their lives. Sometimes, when websites are not profitable or when the developer loses interest in the project, (s)he takes the website down along with all the amazing content found there. There are still parts of the world where the Internet is not available at all times or where people do not have access to Internet 24×7. Offline access to websites can be a boon to these people.

Either way, it is a good idea to save important websites with valuable data offline so that you can refer to them whenever you want. It is also a time saver: you won’t need an Internet connection, and you never have to worry about the website shutting down. There are many programs and web services that will let you download websites for offline browsing.

Let’s take a look at them below.

Download Entire Website

1. HTTrack

This is probably one of the oldest website downloaders available for the Windows platform. There is no web or mobile app version, primarily because Windows was the most commonly used platform when it was written. The UI is dated, but the features are powerful and it still works like a charm. Released under the GPL, this free, open source website downloader has a light footprint.

You can download all webpages, including files and images, with all the links remapped and intact. Once you open an individual page, you can navigate the entire website in your browser, offline, by following the link structure. What I like about HTTrack is that it can update an existing copy by downloading only the parts that have changed recently, so I don’t have to download everything all over again. It comes with scan rules that let you include or exclude file types, webpages, and links.
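HTTrack also ships a command-line client, and scan rules can be passed to it as filter patterns. The sketch below assumes the `httrack` binary is installed and on your PATH; the wrapper name `mirror_with_rules`, the example.com URL, and the specific filters are illustrative assumptions, not something the review above covers.

```shell
# Hypothetical wrapper around HTTrack's command-line client.
# Assumes the `httrack` binary is installed.
mirror_with_rules() {
  url="$1"       # site to mirror
  out_dir="$2"   # folder that receives the local copy
  shift 2        # remaining arguments are scan rules (filters)
  # "+pattern" includes matching URLs, "-pattern" excludes them.
  httrack "$url" -O "$out_dir" "$@" -v
}

# Usage (keep everything on example.com, skip ZIP archives):
#   mirror_with_rules "https://www.example.com/" "./example-mirror" \
#     "+*.example.com/*" "-*.zip"
# To refresh an existing mirror later, run `httrack --update` from inside
# the mirror folder.
```

Passing the filters as arguments keeps the wrapper reusable across sites instead of hard-coding one domain.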

Pros:

- Free

- Open source

- Scan rules

Cons:

- Bland UI

2. SurfOnline

SurfOnline is another Windows-only program that you can use to download websites for offline use; however, it is not free. Instead of opening webpages in a browser like Chrome, you can browse downloaded pages right inside SurfOnline. Like HTTrack, it has rules for choosing what to download, but they are very limited: you can only select media type, not file type.

You can download up to 100 files simultaneously, but the total cannot exceed 400,000 files per project. On the plus side, you can also download password-protected files and webpages. SurfOnline pricing begins at $39.95 and goes up to $120.

Pros:

- Scan rules

- CHM file support

- Write to CD

- Built-in browser

- Download password-protected pages

Cons:

- UI is dated

- Not free

- Limited scan rules

3. Website eXtractor

This is another website downloader that comes with its own browser. Frankly, I would rather stick with Chrome or something like Firefox. Anyway, Website eXtractor looks and works much like the previous two website downloaders we discussed. You can omit or include files based on links, name, media type, and also file type. There is also an option to download files, or not, based on directory.

One feature I like is the ability to search for files based on file extension which can save you a lot of time if you are looking for a particular file type like eBooks. The description says that it comes with a DB maker which is useful for moving websites to a new server but in my personal experience, there are far better tools available for that task.

The free version is limited to downloading 10,000 files after which it will cost you $29.95.

Pros:

- Built-in browser

- Database maker

- Search by file type

- Scan rules

Cons:

- Not free

- Basic UI

4. Getleft

Getleft has a better and more modern UI than the website downloaders above. It comes with some handy keyboard shortcuts which regular users will appreciate. Getleft is free and open source, but its development has pretty much stalled.

There is no support for secure sites (https); however, you can set rules for downloading file types.

Pros:

- Open source

Cons:

- No development

5. SiteSucker

SiteSucker is the first macOS website downloader on this list. It ain’t pretty to look at, but that is not why you are using a site downloader anyway. I am not sure whether it is the restrictive nature of Apple’s ecosystem or the developer wasn’t thinking ahead, but SiteSucker lacks key features like search and scan rules.

This means there is no way to tell the software what you want to download and what needs to be left alone. Just enter the site URL and hit Start to begin the download process. On the plus side, there is an option to translate downloaded materials into different languages. SiteSucker will cost you $4.99.

Pros:

- Language translator

Cons:

- No scan rules

- No search

6. Cyotek Webcopy

Cyotek Webcopy is another program for downloading websites for offline access. You can define whether you want to download the whole site or just parts of it. Unfortunately, there is no way to download files based on type, such as images or videos.

Cyotek Webcopy uses scan rules to determine which part of the website you want to scan and download and which part to omit. For example, tags, archives, and so on. The tool is free to download and use and is supported by donations only. There are no ads.

7. Dumps (Wikipedia)

Wikipedia is a good source of information, and if you know your way around and follow the sources cited on each page, you can overcome some of its limitations. There is no need to use a website ripper or downloader to get Wikipedia pages onto your hard drive. Wikipedia itself offers dumps.

These dumps are available in different formats, including HTML, XML, and DVDs. Depending on your needs, you can download these dumps and access them offline. Note that Wikipedia has specifically asked users not to use web crawlers.
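As a rough illustration of fetching a dump instead of crawling, the sketch below downloads the latest article dump for one Wikipedia edition. It assumes Wget is installed and that the file follows the naming layout used on dumps.wikimedia.org; the helper name `fetch_wiki_dump` is hypothetical.

```shell
# Hypothetical helper: fetch the latest article dump for one Wikipedia edition.
# Assumes the dumps.wikimedia.org naming layout shown in the URL below.
fetch_wiki_dump() {
  wiki="$1"   # database name, e.g. simplewiki or enwiki
  # -c resumes a partial download instead of starting over.
  wget -c "https://dumps.wikimedia.org/${wiki}/latest/${wiki}-latest-pages-articles.xml.bz2"
}

# Usage: fetch_wiki_dump simplewiki
```

The Simple English edition is used in the usage line because its dump is far smaller than the English one, which makes it a more practical first test.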

8. Teleport Pro

Most website downloaders, rippers, and crawlers are good at what they do until the number of requests exceeds a certain limit. If you are looking to crawl and download a big site with hundreds of thousands of pages, you will need a more powerful and stable program like Teleport Pro.

Priced at $49.95, Teleport Pro is a high-speed website crawler and downloader with support for password-protected sites. You can search, filter, and download files based on file type and keywords, which can be a real time saver. Most web crawlers and downloaders do not support JavaScript, which is used on a lot of sites. Teleport will handle it easily.

Pros:

- JavaScript support

- Handles large sites

- Advanced scan rules

- FTP support

Cons:

- None

9. Offline Pages Pro

This is an iOS app for iPhone and iPad users who are soon traveling to a region where Internet connectivity is going to be a luxury. Keeping this thought in mind, you can download and use Offline Pages Pro for $9.99, rather on the expensive side, to browse webpages offline.

The idea is that you can surf your favorite sites even when you are on a flight. The app works as advertised but do not expect to download large websites. In my opinion, it is better suited for small websites or a few webpages that you really need offline.

10. Wget

Wget (pronounced “W get”) is a command-line utility for downloading websites. Remember the hacking scene from the movie The Social Network, where Mark Zuckerberg downloads the pictures for his website Facemash? Yes, he used Wget. It is available for Mac, Windows, and Linux.

What makes Wget different from the other downloaders in this list is that it not only lets you download websites but can also fetch individual files, such as MP3s or videos that a site links to directly, and even files that are behind a login page. That said, since it’s a command-line tool, you will need some terminal expertise to use it. A simple Google search should do.

For example, the command ‘wget www.example.com’ will download only the home page of the website. However, if you want an exact mirror of the website, including all the internal links and images, you can use the following command.

wget -m www.example.com
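On its own, -m (short for --mirror) turns on recursion and timestamping but does not rewrite links for local viewing. The sketch below combines it with a few other GNU Wget options that are commonly used for offline browsing; the function name `mirror_site` and the one-second delay are illustrative choices rather than recommendations from the article.

```shell
# Hypothetical helper: mirror a site for offline browsing with GNU Wget.
mirror_site() {
  url="$1"
  # --mirror            recursive download with timestamping (same as -m)
  # --convert-links     rewrite links so the local copy works offline
  # --page-requisites   also fetch the images, CSS and scripts pages need
  # --adjust-extension  save HTML pages with an .html suffix
  # --no-parent         never climb above the starting directory
  # --wait=1            pause one second between requests
  wget --mirror --convert-links --page-requisites \
       --adjust-extension --no-parent --wait=1 "$url"
}

# Usage: mirror_site https://www.example.com/
```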

Pros:

- Available for Windows, Mac, and Linux

- Free and Open Source

- Download almost everything

Cons:

- Requires some knowledge of the command line

Wrapping Up: Download Entire Website

These are some of the best tools and apps to download websites for offline use. You can open these sites in Chrome, just like regular online sites, but without an active Internet connection. I would recommend HTTrack if you are looking for a free tool and Teleport Pro if you can cough up some dollars. The latter is also more suitable for heavy users who do research and work with data day in, day out. Wget is another good option if you feel comfortable with the command line.